Yingsi Qin

I am a final-year PhD candidate in Electrical and Computer Engineering at Carnegie Mellon University, advised by Prof. Aswin Sankaranarayanan and Prof. Matthew O'Toole. I am part of the Image Science Lab and Carnegie Mellon Computational Imaging.

I obtained my B.S. in Computer Science from Columbia University, focused on Artificial Intelligence, and my B.A. in Physics from Colgate University. I have interned at Meta Reality Labs Display Systems Research (2024, 2025), Snap Research Computational Imaging (2020), and Google Search (2019).

Research Interests

I am broadly interested in embedding fundamental physical laws, such as complex light transport and 3D geometry, into computational frameworks and generative algorithms to better understand and interact with the visual world. My research interests are at the intersection of computer vision, computational imaging, 3D perception, signal processing, optics, and machine learning.

News

- [Feb. 2026] I gave a talk at the Johns Hopkins University CCVL Seminar. Thanks for the invite!

- [Jan. 2026] I gave a talk at Cohere Labs. Thanks for the invite!

- [Dec. 2025] I gave a talk at the CMU Computer Vision Reading Group. Thanks for the invite!

- [Nov. 2025] I gave a talk at MIT Quantum Photonics. Thanks Dirk for having me!

- [Oct. 2025] Our paper SVAF won the Best Paper Honorable Mention Award at ICCV 2025!

- [Oct. 2025] I gave a talk for SVAF at ICCV 2025. Recordings available. Thanks for the invite!

- [Oct. 2025] We demoed our real-time prototype for SVAF at ICCV 2025!

- [Jul. 2025] Our paper Spatially-Varying Autofocus (SVAF) was accepted to ICCV 2025!

- [May 2025] I started my research scientist internship at Meta Reality Labs Research!

- [Feb. 2025] Grateful to be awarded the James Sprague Presidential Fellowship at CMU!

Selected Publications

Conference

Conference

Conference

ACM SIGGRAPH 2023 Emerging Technologies

Journal

Journal

Journal

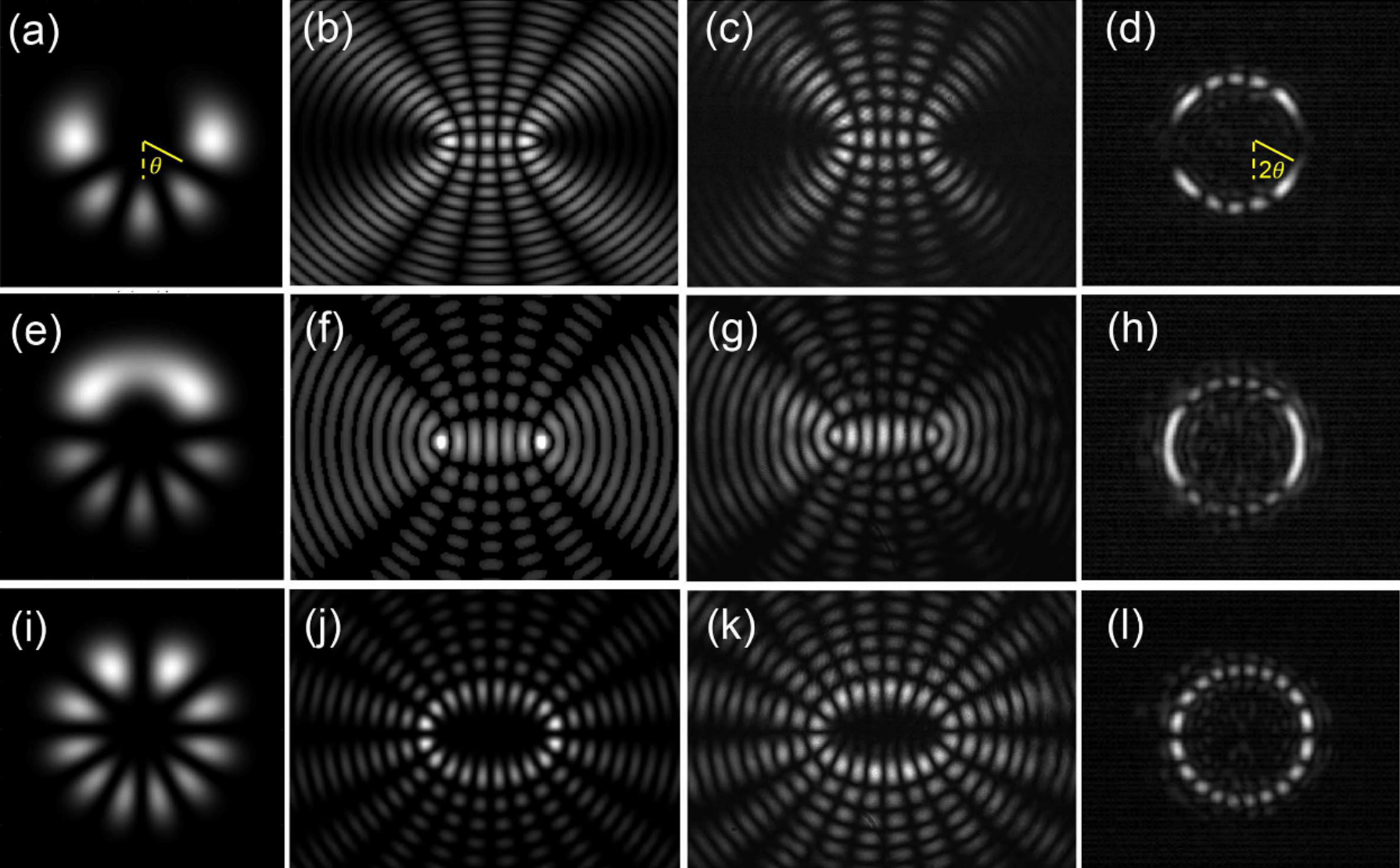

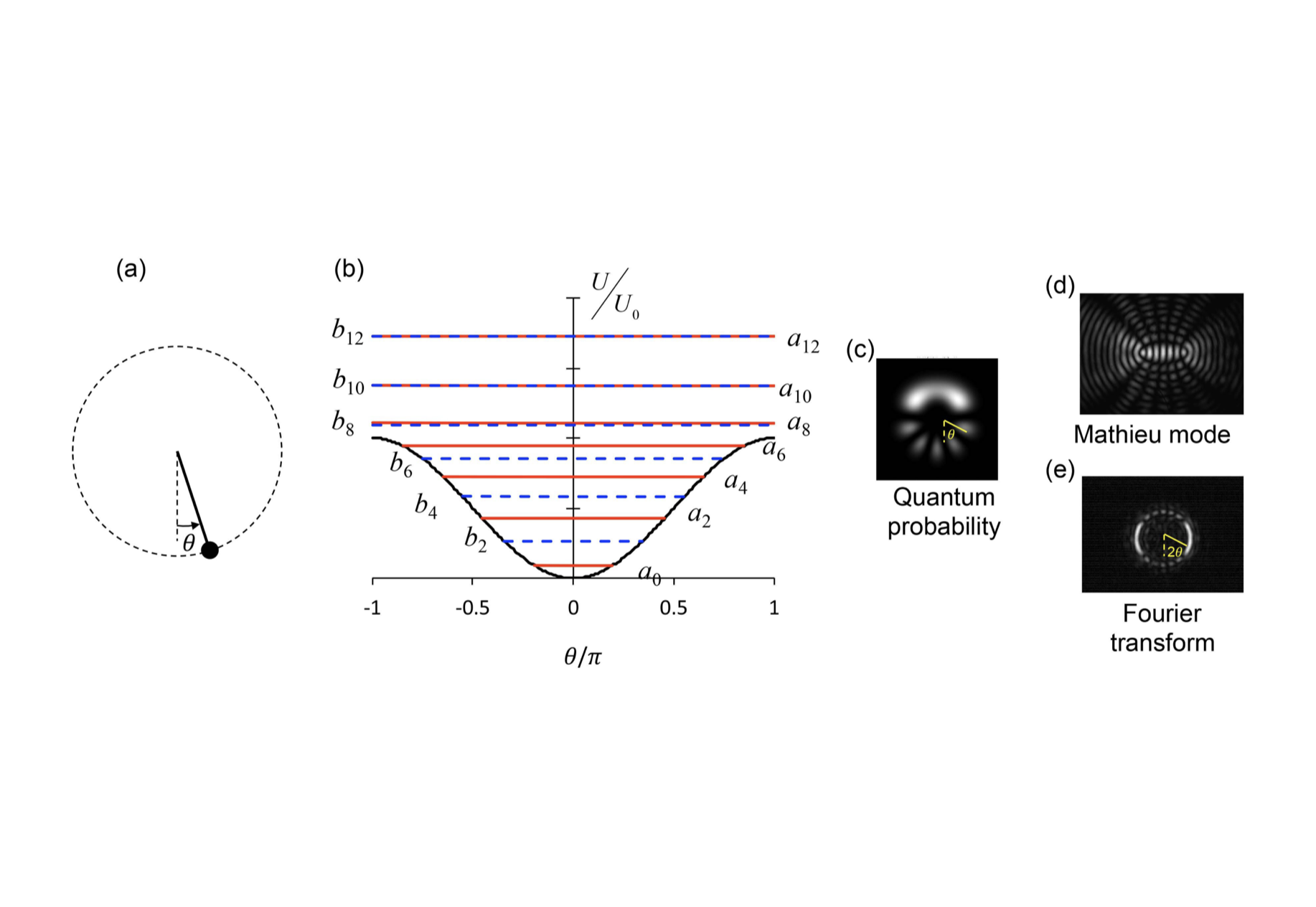

Journal of Optics 2021

Conference

Frontiers in Optics 2019

The full list of publications is on my Google Scholar page.

Selected Talks

- SIGGRAPH 2023, Technical Paper Talk [recordings]: "Split-Lohmann Multifocal Displays", August 2023 in Los Angeles, CA

- SIGGRAPH 2023, 20-Second Fast Forward [recordings]: "Split-Lohmann Multifocal Displays", August 2023 in Los Angeles, CA

- TechBeat 人工智能社区 (AI Community), 30-Minute Talk in Mandarin [recordings]: "Split-Lohmann Multifocal Displays", October 2023, Remote

Other Invited Talks and Demos

- [Feb. 2026] Talk: "Spatially Programmable Lensing" @ Johns Hopkins University CCVL Seminar, Remote

- [Jan. 2026] Talk: "Spatially-Varying Autofocus" @ Cohere Labs, Remote

- [Dec. 2025] Talk: "Spatially Programmable Lensing" @ CMU Computer Vision Reading Group, Pittsburgh, PA

- [Nov. 2025] Talk: "Spatially Programmable Lensing" @ MIT Optics and Quantum Electronics Seminar, hosted by Dirk Englund, Cambridge, MA

- [Oct. 2025] Demo: "Spatially-Varying Autofocus", 2-hour session @ ICCV 2025, Honolulu, HI

- [Jul. 2025] Demo: "Spatially-Varying Autofocus", 2-hour session @ ICCP 2025, Toronto, Canada

Services

Reviewer

ACM Transactions on Graphics (TOG)

IEEE Transactions on Visualization and Computer Graphics (TVCG)

IEEE Transactions on Computational Imaging (TCI)

Optics Express

SIGGRAPH

International Conference on Computer Vision (ICCV)

Computer Vision and Pattern Recognition (CVPR)

European Conference on Computer Vision (ECCV)

Association for the Advancement of Artificial Intelligence (AAAI)

Teaching

- Teaching Assistant, Introduction to Deep Learning, Carnegie Mellon University, Spring 2025

- Teaching Assistant, Signals and Systems, Carnegie Mellon University, Spring 2021

- Peer Mentor, Engineering Student Council, Columbia University, 2019-2020

- Teaching Assistant, Data Structures and Algorithms, Colgate University, 2017-2019

- Teaching Assistant, Introduction to Electricity and Magnetism, Colgate University, Spring 2018

Selected Press Coverage

Spatially-Varying Autofocus

Split-Lohmann Multifocal Displays

Projects

Project for COMS 6735 Visual Databases at Columbia, Spring 2021

Project for COMS 6998 Quantum Computing Theory and Practice at Columbia, Spring 2020

Project for COMS 6998 Computation and the Brain at Columbia, Fall 2020

Project for PHYS 451 Computational Mechanics at Colgate, Fall 2018

Project for PHYS 336 Electronics at Colgate, Spring 2018

Paragliding

I am a paragliding pilot. I fly cross-country (XC) among mountains, over forests, across rivers, and near oceans.